AI 'doctors' under fire: Pennsylvania sues Character.AI over alleged medical claims

State seeks court order to stop chatbots from posing as licensed professionals

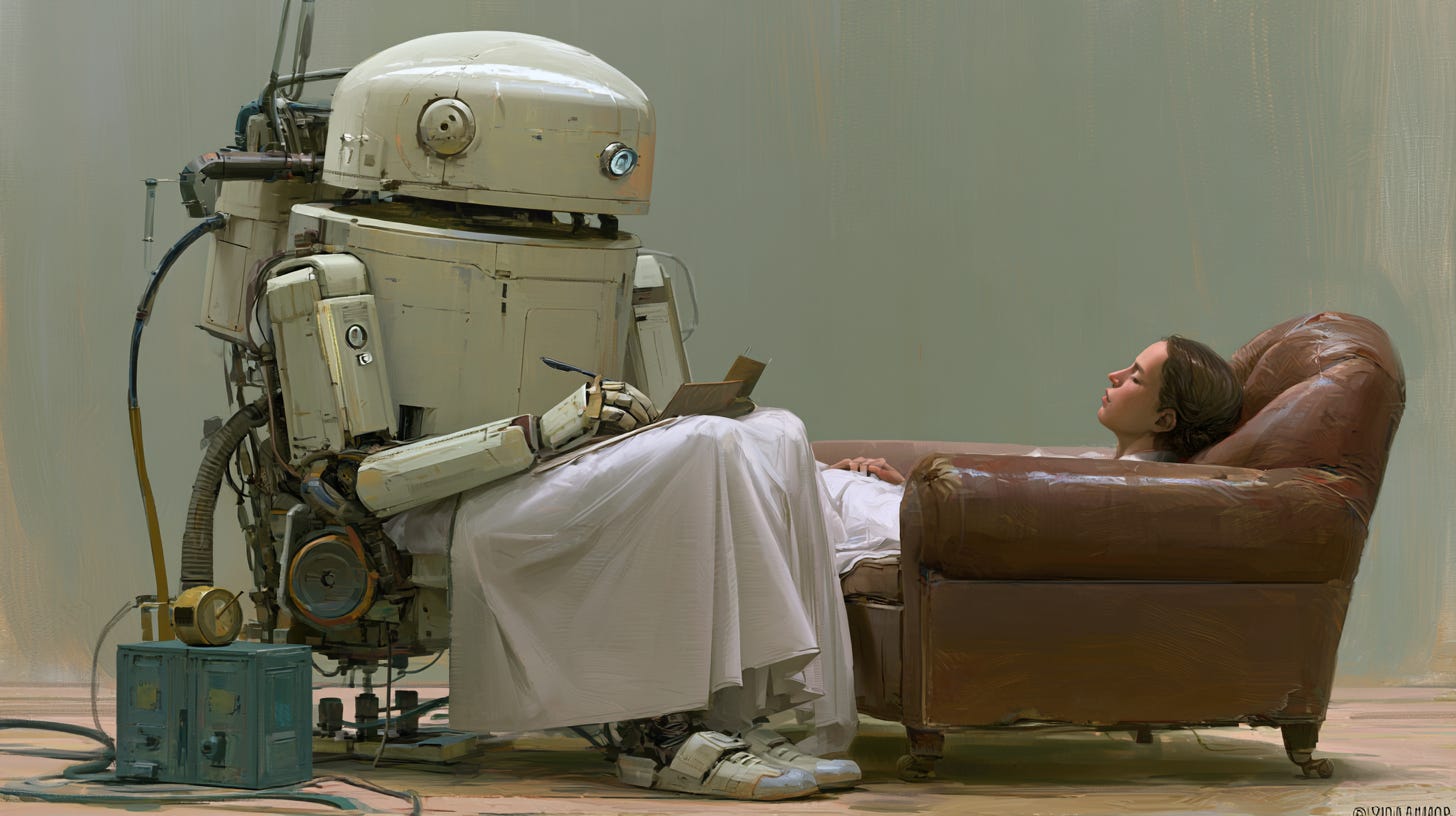

Officials say bots on Character.AI falsely presented themselves as licensed doctors, including psychiatrists

The Shapiro administration has sued Character Technologies, Inc., alleging its AI companion bots on Character.AI misled users into believing they were interacting with licensed medical professionals.

According to the complaint, some chatbot “characters” claimed to be psychiatrists and even said they were licensed in Pennsylvania—at times providing invalid license numbers while discussing mental health symptoms with users.

Governor Josh Shapiro said the practice crosses a clear line: people must know “who — or what — they are interacting with online, especially when it comes to their health.”

The state is seeking a preliminary injunction to halt the behavior while the case proceeds.

The company defended itself in an email to The Outraged Consumer.

“Our highest priority is the safety and well-being of our users,” a spokesperson said. “The user-created Characters on our site are fictional and intended for entertainment and roleplaying. We have taken robust steps to make that clear, including prominent disclaimers in every chat to remind users that a Character is not a real person and that everything a Character says should be treated as fiction. Also, we add robust disclaimers making it clear that users should not rely on Characters for any type of professional advice.”

Why it matters

At the core of the lawsuit is Pennsylvania’s Medical Practice Act, which bars anyone from presenting themselves as a licensed medical professional without proper credentials.

State officials argue that AI tools blur that boundary — especially when platforms allow users to create custom chatbot “characters” that can mimic professionals.

Secretary of the Commonwealth Al Schmidt said the law applies regardless of the technology: “You cannot hold yourself out as a licensed medical professional without proper credentials.”

What the state found

The Department of State’s investigation concluded that:

Some AI bots explicitly claimed professional credentials

At least one chatbot said it was licensed in Pennsylvania and supplied a fake license number

The platform enables users to create bots that can present as doctors or therapists

The lawsuit alleges this amounts to the unauthorized practice of medicine.

What the site says

On its website, Character.AI says it “empowers people to connect, learn, and tell stories through interactive entertainment.” It adds: “Millions of people visit Character.AI every month, using our technology to supercharge their imaginations.”

The home page displays a rotating series of dramatic photos. Though abstract, the images appear to depict people in distress; some seem to show a person standing on the ledge of a tall building.

Media and healthcare organizations strongly discourage such images. A widely used media training guide states:

“Avoid using dramatic… images such as a person standing on a ledge”

Bigger crackdown on AI “companions”

The case is part of a broader push by Pennsylvania to regulate AI tools that interact directly with consumers.

Recent steps include:

Launching an AI Literacy Toolkit (nearly 3,000 uses so far)

Creating an AI Enforcement Task Force to investigate complaints

Setting up a reporting system for suspected unlawful AI behavior

The administration has also coordinated with the state attorney general and held public roundtables on AI risks.

What could change next

Shapiro’s proposed 2026–27 budget calls for new consumer protections targeting AI companion bots, including:

Age verification and parental consent requirements

Mandatory alerts when bots detect self-harm or violence risks

Regular reminders that users are not interacting with a human

A ban on sexual or violent content involving minors

What this means for consumers

This case signals a growing regulatory focus on AI systems that simulate human expertise — especially in high-risk areas like health.

For users, the takeaway is simple:

AI chatbots are not licensed professionals, even if they sound convincing

Medical advice should come from verified, real-world providers

Misleading AI claims may soon face tighter legal consequences nationwide

Bottom line

Pennsylvania’s lawsuit could set a precedent for how states police AI “companion” platforms—drawing a hard line between helpful tools and illegal impersonation of professionals.