Designing addiction? FTC zeroes in on how platforms hook kids

“Dark patterns” and algorithmic nudges face growing scrutiny in youth safety reviews

A new front in the fight over kids’ online safety

The Federal Trade Commission is quietly opening a new front in its oversight of Big Tech—one that could have sweeping implications for how apps and platforms are built for children.

Republican Commissioner Mark Meador said Monday that while the FTC isn’t interested in “telling companies how to run their businesses,” the agency will continue to police online hazards facing children and adults, including those that may stem from the way websites are designed, and could impose more “extreme” remedies when necessary.

He said that rather than focusing solely on harmful content or data collection, regulators are increasingly zeroing in on platform design itself—the features, defaults, and algorithms that shape how young users behave online.

At issue: whether some of the most common elements of modern apps—endless scroll, autoplay videos, push notifications, and engagement streaks—are intentionally engineered to keep kids hooked.

From content moderation to behavioral design

For years, tech oversight has centered on what users see: misinformation, harmful content, or inappropriate material for minors.

Now, regulators are asking a different question:

Are platforms designed to maximize compulsive use among children—even when companies claim their products are safe?

This shift reflects a broader regulatory concern with so-called “dark patterns”—interfaces that subtly steer users toward certain behaviors, often without their full awareness.

In the case of children, critics argue, those design choices can:

Encourage longer screen time through infinite feeds

Use rewards and streaks to build habitual use

Nudge kids to share more personal data

Blur the line between content and advertising

The legal hook: unfair and deceptive practices

The FTC’s authority comes from its mandate to police unfair or deceptive practices, a framework that may increasingly be applied to design itself.

That could mean:

Challenging platforms that claim to protect kids while deploying highly addictive features

Scrutinizing default settings that favor data collection or engagement

Investigating whether companies knowingly optimize for youth retention at the expense of well-being

The agency is also in the midst of updating rules under the Children’s Online Privacy Protection Act, signaling a broader interpretation that could extend beyond data collection into how platforms influence children’s behavior.

A growing “safety-by-design” movement

The FTC’s emerging focus mirrors a global regulatory trend: safety by design.

Rather than reacting to harms after they occur, regulators want platforms to build in protections from the start. That could include:

Default settings that limit data sharing for minors

Restrictions on algorithmic recommendations for younger users

Fewer push notifications and engagement prompts

Clearer separation between ads and organic content

Similar approaches are already taking shape in Europe and the U.K., raising the possibility of cross-border pressure on U.S. tech companies.

Industry on notice

For social media, gaming, and streaming platforms, the implications could be significant.

Features long considered standard—autoplay, infinite scroll, and gamified engagement loops—may soon face regulatory scrutiny if they are shown to disproportionately affect minors.

Companies could be forced to:

Redesign onboarding flows for younger users

Offer age-specific versions with reduced engagement mechanics

Provide more transparency into how recommendation systems work

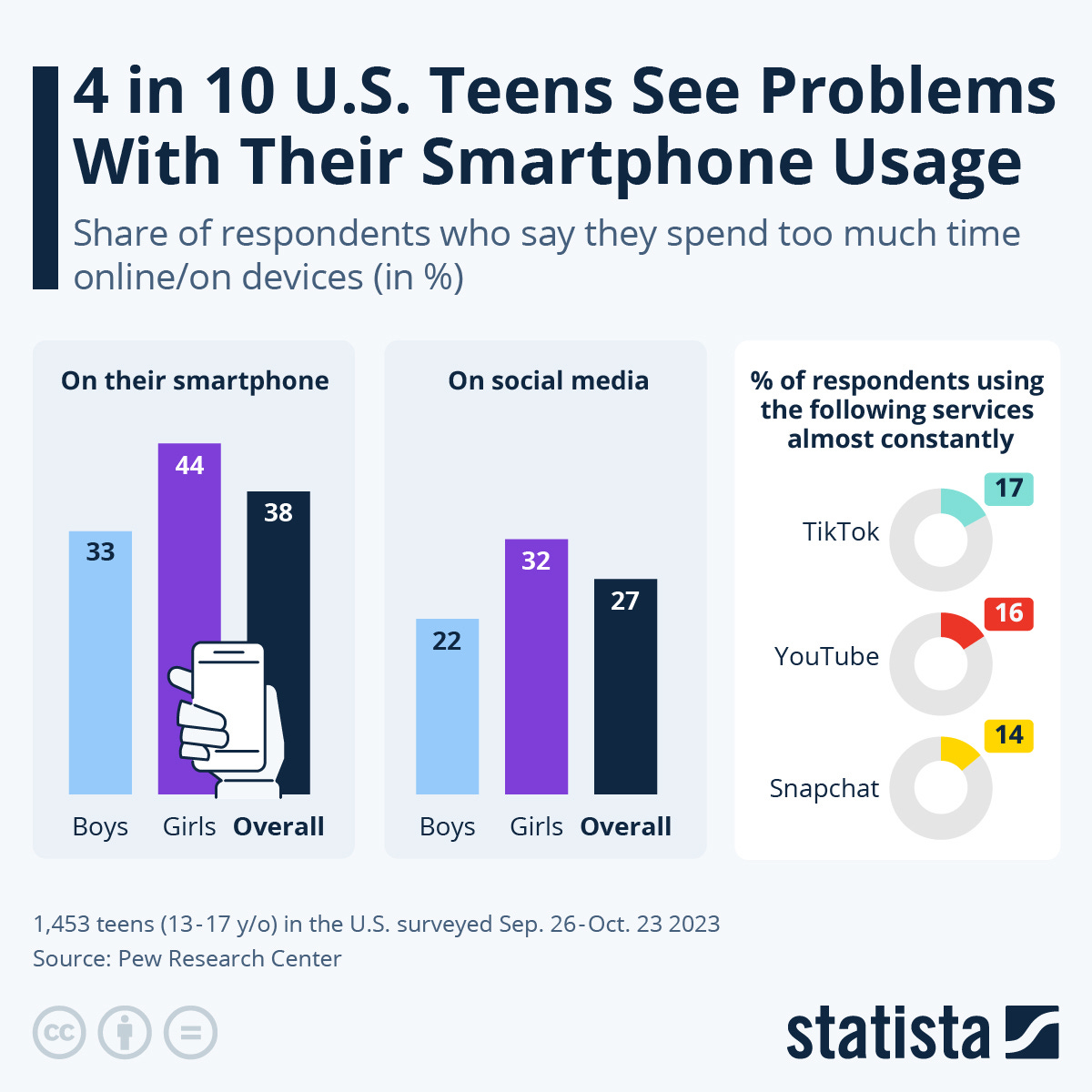

The backdrop: mounting evidence on youth harm

The FTC’s interest comes amid growing legal and scientific pressure on tech platforms.

Recent lawsuits and state-level actions have accused companies of contributing to:

Anxiety and depression among teens

Sleep disruption tied to late-night app use

Exposure to harmful or addictive content loops

Internal research from several companies, revealed in past investigations, has also suggested that the companies were aware of these risks, intensifying calls for accountability.

What this means

For parents:

The debate is shifting from what kids see to how apps are built to keep them engaged—a harder problem to detect and manage at home.

For consumers:

Expect more transparency demands around app design, especially features that encourage prolonged use or data sharing.

For tech companies:

The era of “engagement at all costs” may be ending—at least where minors are concerned.

The bottom line

The FTC’s evolving focus signals a fundamental rethink of digital consumer protection:

It’s no longer just about harmful content

It’s about whether platforms are engineered to be addictive—especially for kids

And if regulators decide the answer is yes, the next wave of enforcement may target not what platforms say—but how they’re built.