Tesla's self-driving system getting a closer look from federal safety regulators

NHTSA escalates its probe to the final stage before a recall could be ordered

Federal auto safety regulators have escalated their investigation into Tesla’s “Full Self-Driving” (FSD) system, deepening scrutiny of a technology that has long been marketed as the future of driving but remains at the center of mounting safety concerns.

The National Highway Traffic Safety Administration (NHTSA) said it has moved its probe into an “engineering analysis,” the final stage before regulators decide whether a safety defect exists—and whether a recall is warranted, Reuters reported.

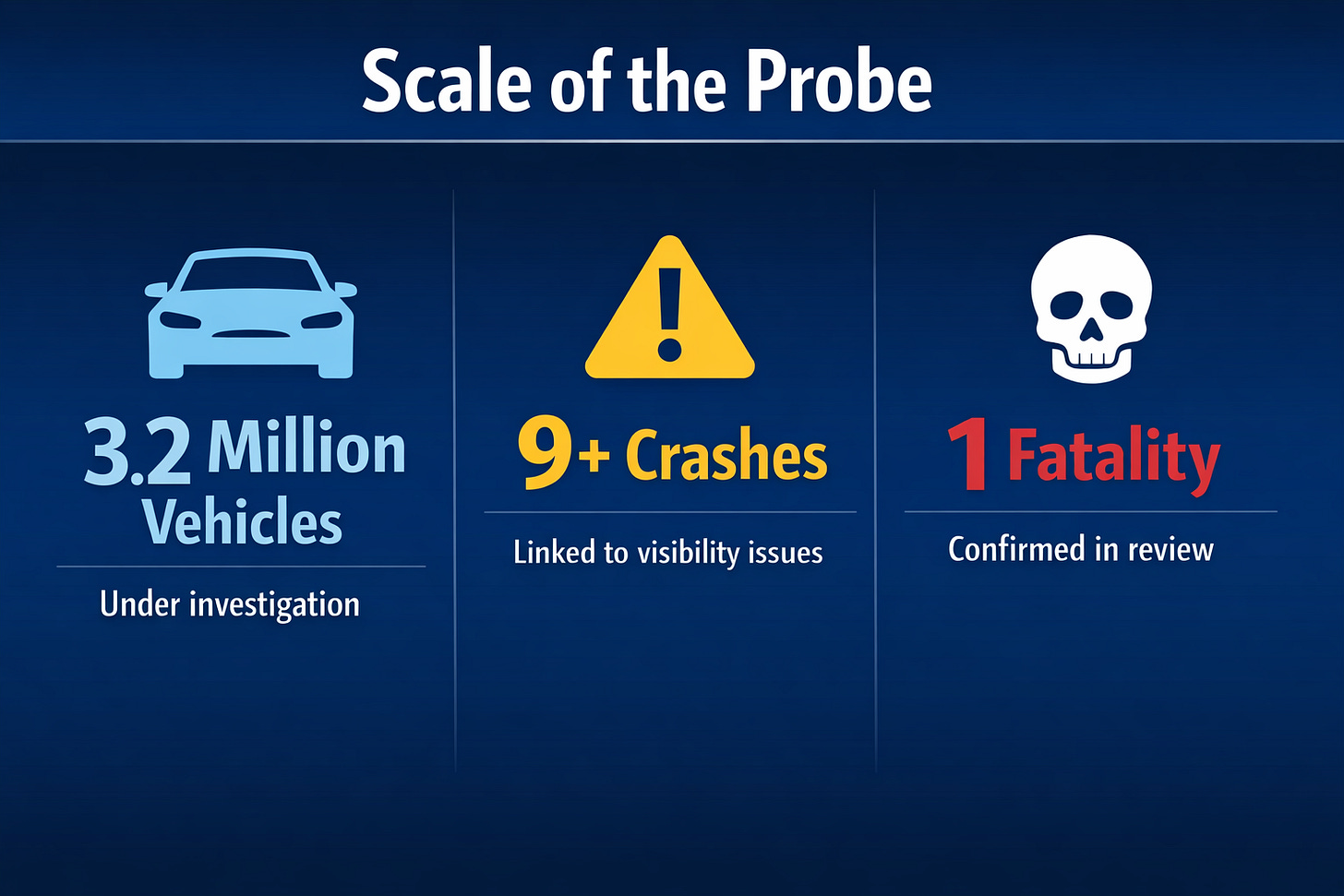

The upgraded investigation now covers approximately 3.2 million Tesla vehicles equipped with the company’s driver-assistance software.

From curiosity to concern

NHTSA investigations typically follow a predictable path: initial complaints, a preliminary evaluation, and—only in more serious cases—an engineering analysis.

This latest move signals that regulators believe the risks may be systemic.

At issue is how Tesla’s system performs when visibility is compromised, including:

Sun glare

Fog and dust

Smoke or airborne particles

In these situations, regulators are examining whether Tesla’s camera-based system can reliably detect hazards—and whether drivers are given enough warning to intervene.

Unlike many competitors, Tesla relies primarily on cameras rather than radar or lidar, a design choice that has drawn both praise for simplicity and criticism for potential blind spots.

Crashes raise red flags

The investigation is tied to a series of crashes in which Tesla vehicles using FSD or related driver-assistance features reportedly failed to respond appropriately to road conditions.

According to regulators:

At least nine incidents are under review

These include one fatal crash and multiple injuries

In several cases, vehicles allegedly:

Failed to detect a leading vehicle

Did not brake in time

Continued operating despite degraded visibility

The agency is also examining whether Tesla’s system provides adequate driver alerts when conditions make automated assistance unreliable.

A system under scrutiny

Tesla’s “Full Self-Driving” name has long been controversial. Despite the branding, the system:

Does not make vehicles fully autonomous

Requires active driver supervision at all times

Regulators and safety advocates have argued that the name itself may contribute to driver overconfidence, potentially leading users to rely too heavily on the system.

The latest probe adds to a growing list of federal inquiries into Tesla’s advanced driver-assistance features, including:

Investigations into crashes involving emergency vehicles

Reviews of systems that allegedly allowed traffic violations

Prior recalls addressing software behavior and driver monitoring

What an engineering analysis means

An engineering analysis is not routine—it’s the stage where regulators conduct detailed technical reviews, analyze crash data and system performance, consult with manufacturers and determine whether a defect exists.

From here, NHTSA can:

Close the case with no action

Negotiate a voluntary recall

Or compel a recall if a safety defect is confirmed

Given the scale of this probe, any recall could affect millions of vehicles, which would make it one of the most consequential regulatory actions involving driver-assistance technology.

The stakes for Tesla—and the industry

The timing is significant. Tesla has been pushing forward with plans tied to autonomous driving, including:

Expanded FSD capabilities

Long-term ambitions for robotaxis

Increased reliance on software-driven vehicle features

A regulatory finding that the system has a safety defect could:

Force software redesigns

Slow deployment timelines

Increase regulatory oversight across the industry

It could also influence how other automakers design and market their own driver-assistance systems.

A broader question: how safe is “self-driving”?

The investigation highlights a central tension in modern automotive technology:

Driver-assistance systems are improving—but they remain imperfect.

Experts have long warned that these systems can perform well under ideal conditions but may struggle in edge cases, especially when sensors are impaired.

The Tesla probe underscores concerns that consumers may not fully understand those limitations.

What happens next

NHTSA has not set a timeline for completing the engineering analysis, but the process can take months.

Key questions regulators will try to answer include:

Does the system fail in predictable ways?

Are those failures preventable?

Do they constitute a safety defect under federal law?

If the answer is yes, Tesla could face:

A large-scale recall

Mandatory software updates

Increased reporting requirements

What is Tesla’s “Full Self-Driving”?

Despite the name, it’s not fully autonomous.

Tesla’s FSD system is classified as a Level 2 driver-assistance system on the SAE driving-automation scale, meaning:

The car can steer, accelerate, and brake

But the driver must remain engaged at all times

Key features

Adaptive cruise control

Automatic lane changes

Traffic signal recognition

Navigation on highways and city streets

Key limitations

Requires constant human supervision

May struggle in poor visibility

Can make unexpected or incorrect decisions

Bottom line: It’s an advanced assist system—not a self-driving car.

TIMELINE: Tesla and Federal Safety Scrutiny

2016–2018

Early Autopilot crashes draw national attention and initial investigations.

2021

NHTSA opens probe into crashes involving parked emergency vehicles.

2023–2024

Tesla issues recalls and software updates tied to Autopilot behavior and driver monitoring.

2025

New investigation launched into FSD performance in complex driving scenarios.

2026 (Now)

Probe escalates to engineering analysis, covering approximately 3.2 million vehicles.

The Bottom Line

This is not just another regulatory inquiry.

It’s a critical test of whether today’s most advanced driver-assistance systems are safe enough for widespread use—and whether consumers fully understand their limits.

For Tesla, the outcome could shape the future of its self-driving ambitions.

For regulators, it may define how aggressively the government oversees the next generation of automotive technology.